Databricks → Snowflake migration

Move Databricks workloads (Spark SQL, notebooks, Delta Lake tables, jobs, and streaming pipelines) to Snowflake with predictable conversion and verified parity. SmartMigrate makes semantic and operational differences explicit, produces reconciliation evidence you can sign off on, and gates cutover with rollback-ready criteria—so production outcomes are backed by proof, not optimism.

- Scope

- Query and schema conversion

- Semantic and type alignment

- Validation and cutover readiness

- Risk areas

- Deliverables

- Prioritized execution plan

- Parity evidence and variance log

- Rollback-ready cutover criteria

Is this migration right for you?

- You run production analytics or lakehouse workloads on Databricks (SQL Warehouses + Spark jobs/notebooks).

- You have Delta Lake as a canonical storage layer and need a Snowflake-native contract for storage, governance, and consumption.

- You operate jobs with dependencies (multi-task workflows), shared libraries, parameterized notebooks, and SLAs.

- You have streaming or incremental pipelines (Auto Loader, Structured Streaming, MERGE/upserts) where correctness must be provable.

- You need objective cutover sign-off (golden queries + thresholds, reconciliation evidence, rollback gates) and cost/performance predictability.

It is probably not the right fit if:

- You’re doing a one-time export/import with no ongoing transformations, no query parity requirement, and no operational SLAs.

- Your Databricks usage is purely exploratory with no standardized jobs, schemas, or consumers.

- You can tolerate “close enough” outputs and don’t need reconciliation evidence.

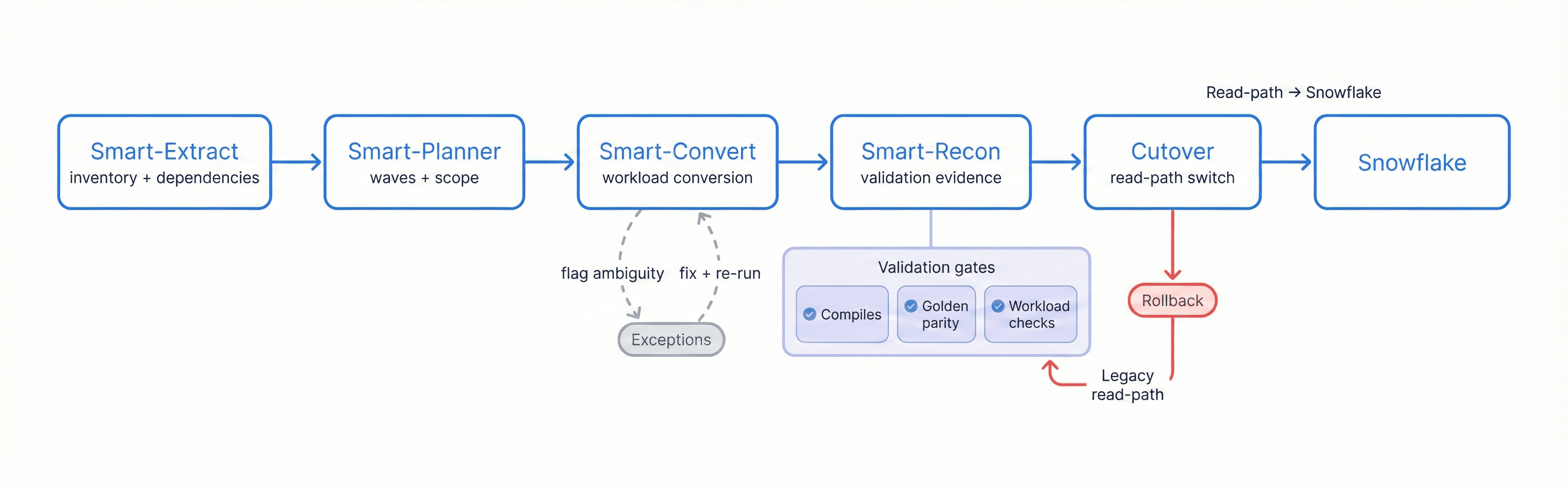

Migration Flow

Extract → Plan → Convert → Reconcile → Cutover to Snowflake, with exception handling, validation gates, and a rollback path

SQL & Workload Conversion Overview

Databricks → Snowflake migration is not just “SQL translation.” It’s a platform shift: Spark’s execution model, notebooks-as-code, and Delta Lake semantics must be re-homed into Snowflake’s warehouse-centric compute model, SQL semantics, governance, and operational patterns.

SmartMigrate converts what is deterministic, flags ambiguity, and structures the remaining work so engineering teams can resolve exceptions quickly.

What we automate vs. what we flag:

- Automated:

  - Inventory and dependency graphing of notebooks, jobs/workflows, SQL assets, and table lineage

  - Mapping common Spark SQL patterns to Snowflake SQL (safe function rewrites, routine DDL, straightforward joins/aggregations)

  - Converting basic Delta table schemas into Snowflake tables with consistent naming/typing

  - Scaffolding ingestion patterns (staging → load) and standard ELT transforms where patterns are deterministic

- Flagged as “review required”:

  - Spark-specific functions/semantics (arrays/maps/structs, higher-order functions, explode/posexplode patterns)

  - Timezone/timestamp edge cases and implicit casts/NULL-sensitive logic

  - Complex UDFs (Scala/Python), custom libraries, and notebook side effects (writes, temp views, caching)

  - MERGE/upsert semantics and CDC behavior (late-arriving data, dedupe rules)

  - Streaming jobs (Structured Streaming state, watermarking, exactly-once expectations)

  - Performance-sensitive query shapes and large shuffle-heavy transforms

- Manual by design:

  - Final architecture choices: batch vs near-real-time, Snowflake-native ingestion tooling, orchestration pattern

  - Warehouse strategy (sizing, auto-suspend/resume, workload isolation, multi-cluster)

  - Modeling strategy (dbt/Dataform-style ELT, incremental models, snapshotting)

  - Replacement plan for non-SQL code paths (UDFs, Python/Scala transforms) and job runtime semantics
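To make the automated/flagged split concrete, here is a minimal sketch of a schema converter that maps simple Spark column types directly and attaches a review note to anything with ambiguous semantics. The type mapping, the `convert_table` helper, and the note wording are illustrative assumptions, not SmartMigrate's actual rules; in particular, the choice of `TIMESTAMP_LTZ` for Spark timestamps is a default that a reviewer should confirm.

```python
import re

# Assumed mapping for illustration; confirm each choice against your data contracts.
SAFE_TYPES = {
    "string": "VARCHAR",
    "boolean": "BOOLEAN",
    "tinyint": "TINYINT",
    "smallint": "SMALLINT",
    "int": "INTEGER",
    "bigint": "BIGINT",
    "float": "FLOAT",
    "double": "DOUBLE",
    "date": "DATE",
    "binary": "BINARY",
}

# Types that convert syntactically but need a human decision on semantics.
REVIEW_TYPES = {
    "timestamp": ("TIMESTAMP_LTZ", "confirm timezone semantics (LTZ vs NTZ)"),
    "array": ("ARRAY", "element typing and NULL handling may differ"),
    "map": ("OBJECT", "map keys become object keys; check key types and ordering"),
    "struct": ("OBJECT", "declared field types are not enforced in a semi-structured OBJECT"),
}

DECIMAL = re.compile(r"decimal\((\d+),\s*(\d+)\)")


def convert_column(spark_type):
    """Return (snowflake_type, review_note or None) for one Spark column type."""
    t = spark_type.strip().lower()
    m = DECIMAL.fullmatch(t)
    if m:
        return f"NUMBER({m.group(1)},{m.group(2)})", None
    if t in SAFE_TYPES:
        return SAFE_TYPES[t], None
    # Strip type parameters, e.g. "array<string>" -> "array".
    base = re.split(r"[<(]", t, maxsplit=1)[0]
    if base in REVIEW_TYPES:
        return REVIEW_TYPES[base]
    # Unknown types fall back to VARIANT and are always flagged.
    return "VARIANT", f"unrecognized Spark type '{spark_type}'"


def convert_table(table, columns):
    """Build a CREATE TABLE statement plus a list of review-required flags."""
    cols, flags = [], []
    for name, spark_type in columns:
        sf_type, note = convert_column(spark_type)
        cols.append(f"  {name} {sf_type}")
        if note:
            flags.append(f"{table}.{name}: {note}")
    ddl = f"CREATE TABLE {table} (\n" + ",\n".join(cols) + "\n);"
    return ddl, flags


ddl, flags = convert_table(
    "orders",
    [("id", "bigint"), ("amount", "decimal(18, 2)"),
     ("tags", "array<string>"), ("created_at", "timestamp")],
)
print(ddl)
for flag in flags:
    print("REVIEW:", flag)
```

The point of the design is that the converter never silently guesses: every column either has a direct mapping or produces an entry in the review list.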

Common Failure Modes

- Delta Lake semantics assumed to carry over: Features like ACID MERGE patterns, schema evolution behaviors, and “read latest version” expectations create correctness drift if re-implemented implicitly.

- Spark SQL ≠ Snowflake SQL in semi-structured logic: Array/map/struct-heavy transformations translate syntactically but differ in nullability, ordering, and edge-case outputs.

- Notebook side effects and hidden state: Notebooks that rely on temp views, caching, implicit globals, or driver-side logic behave differently when moved into pure SQL/ELT form.

- Streaming state and watermarking mismatch: Structured Streaming jobs with stateful aggregations and watermark-based lateness handling don’t map 1:1; “near-real-time” becomes “wrong-real-time” without an explicit design.

- MERGE/upsert drift and dedupe gaps: Incremental pipelines lose dedupe/late-data correction rules, causing duplicates, missing updates, or inflated KPIs.

- Cost model whiplash: Spark cluster costs (and job runtimes) don’t translate directly to Snowflake credits; warehouse sizing, concurrency bursts, and long-running transforms can surprise teams.

- Performance whiplash from shuffle-heavy transforms: Spark’s distributed execution patterns can hide expensive reshapes. When moved to Snowflake, large intermediates and unpruned joins become slow/expensive unless query shapes are redesigned.

- Governance and access patterns missed: Unity Catalog / workspace permissions don’t map directly to Snowflake RBAC, warehouses, resource monitors, and data sharing patterns.
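The MERGE/upsert failure mode is easier to see with the rules written down. The sketch below models a "dedupe the staging batch, then upsert" step in plain Python, assuming an `id` key and an ISO-formatted `updated_at` column (both hypothetical names). It is a model of the rule you would encode in a Snowflake MERGE, not the MERGE itself.

```python
def dedupe_latest(batch, key="id", ts="updated_at"):
    """Keep one row per key: the row with the greatest timestamp.
    On equal timestamps the first row seen wins."""
    latest = {}
    for row in batch:
        current = latest.get(row[key])
        if current is None or row[ts] > current[ts]:
            latest[row[key]] = row
    return latest


def merge_batch(target, batch, key="id", ts="updated_at"):
    """Upsert a batch into target (a dict keyed by id) without letting
    late-arriving, older rows overwrite newer ones."""
    merged = dict(target)
    for k, row in dedupe_latest(batch, key, ts).items():
        existing = merged.get(k)
        if existing is None or row[ts] > existing[ts]:
            merged[k] = row
    return merged


target = {1: {"id": 1, "status": "shipped", "updated_at": "2024-01-03"}}
batch = [
    {"id": 1, "status": "packed", "updated_at": "2024-01-02"},  # late arrival, older
    {"id": 2, "status": "new", "updated_at": "2024-01-03"},
    {"id": 2, "status": "paid", "updated_at": "2024-01-04"},    # duplicate key, newer
]

once = merge_batch(target, batch)
twice = merge_batch(once, batch)  # replaying the same batch should change nothing

print(once[1]["status"], once[2]["status"], once == twice)
```

If either the dedupe step or the "only apply newer rows" guard is dropped during conversion, the late row for `id` 1 overwrites the shipped status, or key 2 produces two rows. That is the drift described above.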

Validation & Reconciliation Summary

In a Databricks → Snowflake migration, success must be measurable. We validate correctness in layers: first ensuring translated workloads compile and execute reliably, then proving outputs match expected business meaning via reconciliation.

Validation is driven by pre-agreed thresholds and a defined set of golden queries and datasets. This makes sign-off objective: when reconciliation passes, cutover is controlled; when it fails, you get a precise delta report pinpointing where semantics, type mapping, incremental logic, or pipeline behavior needs adjustment.

Checks included (typical set):

- Row counts by table and key partitions where applicable

- Null distribution + basic profiling (min/max, distinct counts where appropriate)

- Checksums/hashes for stable subsets where feasible

- Aggregate comparisons by key dimensions (day, region, customer/product keys)

- Sampling diffs: edge partitions, late-arrival windows, known corner cases

- Query result parity for golden queries (dashboards, KPI queries, model outputs)

- Incremental correctness checks (upsert/merge idempotency, dedupe rules)

- Post-cutover monitoring plan (latency, credit burn, failures, concurrency/warehouse saturation)
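As a rough illustration of the first three checks, the sketch below profiles two row sets (for example, the same table exported from Databricks and from Snowflake) and reports row-count, NULL-distribution, and checksum differences. The `profile` and `reconcile` helpers and the sample columns are hypothetical; a real run would push these computations down into each platform rather than pulling rows into Python.

```python
import hashlib


def profile(rows, columns):
    """Row count, per-column NULL counts, and an order-independent checksum."""
    null_counts = {c: 0 for c in columns}
    checksum = 0
    for row in rows:
        for c in columns:
            if row.get(c) is None:
                null_counts[c] += 1
        # Canonical text form of the row; type-mapping differences surface here.
        canonical = "|".join(repr(row.get(c)) for c in columns)
        h = hashlib.sha256(canonical.encode("utf-8")).digest()
        # Summing row hashes (mod 2**64) ignores row order but counts duplicates.
        checksum = (checksum + int.from_bytes(h[:8], "big")) % 2**64
    return {"rows": len(rows), "nulls": null_counts, "checksum": checksum}


def reconcile(source_rows, target_rows, columns):
    """Return a list of human-readable findings; an empty list means parity."""
    src = profile(source_rows, columns)
    tgt = profile(target_rows, columns)
    findings = []
    if src["rows"] != tgt["rows"]:
        findings.append(f"row count: source={src['rows']} target={tgt['rows']}")
    for c in columns:
        if src["nulls"][c] != tgt["nulls"][c]:
            findings.append(
                f"null count in {c}: source={src['nulls'][c]} target={tgt['nulls'][c]}"
            )
    if src["checksum"] != tgt["checksum"]:
        findings.append("checksum mismatch")
    return findings


source = [{"id": 1, "region": "EU"}, {"id": 2, "region": None}]
same_data_reordered = [{"id": 2, "region": None}, {"id": 1, "region": "EU"}]
null_became_empty = [{"id": 1, "region": "EU"}, {"id": 2, "region": ""}]

print(reconcile(source, same_data_reordered, ["id", "region"]))
print(reconcile(source, null_became_empty, ["id", "region"]))
```

The second comparison shows why NULL distribution is checked separately from row counts: a NULL silently converted to an empty string keeps the count identical but changes the meaning of the data.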

Performance & Optimization Considerations

- Workload isolation by warehouse: Separate BI, ELT, and ad-hoc exploration to avoid contention and unpredictable latency.

- Warehouse sizing + auto-suspend discipline: Right-size for peak needs; prevent idle credit burn.

- Pruning-aware table design: Align ingest patterns and dominant filters so micro-partition pruning works for real queries.

- Selective clustering (when it pays): Apply clustering keys only to tables where repeated hot queries benefit materially.

- Materialized views and summary tables: Stabilize BI workloads and reduce repeated heavy transforms.

- Incremental modeling strategy: Prefer incremental ELT patterns that avoid full reprocessing; design for late-arriving data.

- Semi-structured performance: Optimize VARIANT access patterns and flattening strategy, and avoid repeated heavy JSON parsing.

- Query plan observability: Use Snowflake query history/profiles to detect regressions and validate tuning outcomes.

- Join strategy tuning: Reduce large intermediates by managing join cardinality, filters, and spill-prone patterns.
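To show why warehouse sizing and auto-suspend settings matter for cost, here is a simplified estimator. It assumes a single-cluster standard warehouse, per-second billing with a 60-second minimum each time the warehouse resumes, and credits per hour that double with each size step. Check these rates against current Snowflake pricing documentation; the busy intervals are invented, and real suspend timing is only approximately equal to the configured value.

```python
# Credits per hour for standard warehouses (verify against current Snowflake docs).
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16}
MIN_BILLED_SECONDS = 60  # minimum charge each time the warehouse resumes


def billed_seconds(busy_intervals, auto_suspend_s):
    """Total billed seconds for (start, end) busy intervals, in seconds.

    The warehouse is modeled as running from the first query of a session
    until auto_suspend_s seconds after the last query in that session ends.
    """
    total = 0
    session_start = session_end = None
    for start, end in sorted(busy_intervals):
        if session_start is None:
            session_start, session_end = start, end
        elif start <= session_end + auto_suspend_s:
            # Next query arrives before the warehouse suspends: same session.
            session_end = max(session_end, end)
        else:
            total += max(MIN_BILLED_SECONDS, session_end + auto_suspend_s - session_start)
            session_start, session_end = start, end
    if session_start is not None:
        total += max(MIN_BILLED_SECONDS, session_end + auto_suspend_s - session_start)
    return total


def estimate_credits(size, busy_intervals, auto_suspend_s):
    """Estimated credits for one warehouse of the given size."""
    return CREDITS_PER_HOUR[size] * billed_seconds(busy_intervals, auto_suspend_s) / 3600


# Hypothetical workload: two bursts close together, then one short query much later.
intervals = [(0, 300), (320, 600), (7200, 7210)]

for suspend in (60, 600):
    credits = estimate_credits("M", intervals, suspend)
    print(f"auto-suspend {suspend}s: {billed_seconds(intervals, suspend)}s billed, "
          f"{credits:.2f} credits")
```

In this toy workload, raising auto-suspend from 60 to 600 seconds more than doubles the billed time even though the query work is identical, which is the idle credit burn the list above warns about.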

Databricks → Snowflake migration checklist

- Parity contract exists: Do you have signed-off golden queries/reports + thresholds (including semi-structured and time edge cases) before conversion starts?

- Asset inventory is complete: Have you inventoried notebooks, jobs/workflows, SQL assets, libraries, and downstream consumers (BI + ML features) so nothing surprises you at cutover?

- Delta Lake behavior is mapped intentionally: Have you identified where Delta features are relied on (MERGE, schema evolution, time travel expectations) and defined Snowflake equivalents?

- Incremental + CDC semantics are explicit: Do you know which pipelines rely on upserts/dedupes/late-data corrections, and have you defined Snowflake patterns (staging + MERGE, idempotency rules)?

- Streaming/near-real-time is designed, not assumed: If you have streaming, have you decided how to replicate state, watermarking semantics, and operational SLOs (or re-scope to micro-batch)?

- Non-SQL code paths are in scope: Have you cataloged UDFs, Python/Scala transforms, and custom libraries, and decided how each will be replaced (SQL, external functions, pipeline transforms, or retirement)?

- Cutover is rollback-safe under real concurrency and cost: Are the parallel run, canary gates, rollback criteria, and Snowflake guardrails (credits, warehouse saturation, query latency, failure rates) ready?
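The last checklist item can be made mechanical by writing the gates down as data. The sketch below is one possible shape, with hypothetical metric names and thresholds; the actual gates and limits would come from your parity contract and SLAs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gate:
    metric: str
    limit: float
    higher_is_worse: bool = True  # set False for metrics where low values are bad


def evaluate_gates(gates, observed):
    """Return (decision, breaches). A missing measurement counts as a breach."""
    breaches = []
    for g in gates:
        value = observed.get(g.metric)
        if value is None:
            breaches.append(f"{g.metric}: no measurement")
        elif (value > g.limit) if g.higher_is_worse else (value < g.limit):
            breaches.append(f"{g.metric}: observed {value}, limit {g.limit}")
    return ("ROLLBACK" if breaches else "PROCEED"), breaches


# Hypothetical gates for a canary window; every number here is a placeholder.
GATES = [
    Gate("golden_query_mismatch_pct", 0.0),
    Gate("p95_query_latency_s", 12.0),
    Gate("job_failure_rate_pct", 1.0),
    Gate("daily_credits", 400.0),
    Gate("golden_queries_executed", 50, higher_is_worse=False),
]

canary = {
    "golden_query_mismatch_pct": 0.0,
    "p95_query_latency_s": 9.4,
    "job_failure_rate_pct": 0.2,
    "daily_credits": 455.0,
    "golden_queries_executed": 50,
}

decision, breaches = evaluate_gates(GATES, canary)
print(decision)
for breach in breaches:
    print(" -", breach)
```

Treating a missing measurement as a breach is a deliberate choice here: a gate that was never measured should not be allowed to pass by default.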

Frequently asked questions

What are the biggest differences between Databricks and Snowflake for analytics workloads?

How do you migrate notebooks and jobs, not just SQL?

What happens to Delta Lake MERGE and schema evolution behavior?

How do you validate results are correct after conversion?

How do you estimate Snowflake cost after migration?

Can we migrate with minimal downtime?

Get a migration plan you can execute—with validation built in. We’ll inventory your Databricks estate (notebooks, jobs/workflows, Delta tables, streaming/incremental pipelines, and custom libraries), convert representative workloads, surface risks in SQL translation and semantic mapping, and define a validation and reconciliation approach tied to your SLAs. You’ll also receive an ingestion and modeling plan, a cutover plan with rollback criteria, and performance optimization guidance for Snowflake.